Scrutiny 9 Troubleshooting and FAQs

What could be wrong?

If your scan or results didn't turn out as expected, see whether the answer is here.

Crawl finishes with only one link reported

A quick test - switch off javascript and cookies in your browser, then try to reload your page. If you don't see your web page as expected, then your website is requiring one or both of these things to be enabled. These options are under the settings and options for your site, under the Advanced tab.

The first thing to try is to switch the user-agent string to Googlebot (This is the first item in Preferences, first tab, you should be able to select googlebot from the drop-down list). If that doesn't work, switch to one of the 'real' browser user-agent strings, ie Safari or Firefox.

Scrutiny now has a tool to help diagnose this failure to get off the ground. It may anticipate the problem and offer you the diagnostic window after the attempted crawl. If you declined that offer or didn't see it, you can still access the tool from the Tools menu, 'Detailed analysis of starting url. (This tool is available from the menu whether or not the crawl was successful). It shows many things, including a browser window loaded with the page that Scrutiny received, the html code itself, and details of the request / response.

Pages time out / the web server stops responding / 509 / 429 / 999 status codes

This isn't uncommon. Some servers will respond to many simultaneous requests, but some will have trouble coping, or may deliberately stop responding if being bombarded from the same IP.

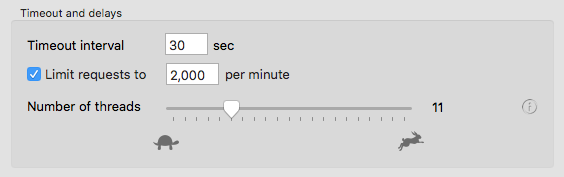

The solution used to involved introducing a delay. Since version 8, Scrutiny handles this much more elegantly. There's now a control above the threads slider allowing you to specify a maximum number of requests per minute.

You don't need to do any maths; it's not 'per thread'. Scrutiny will calculate things according to the number of threads you've set (and using a few threads will help to keep things running smoothly). It will reduce the number of threads if appropriate for your specified maximum requests.

If your server is simply being slow to respond, you can increase the timeout.

The 999 is specific to LinkedIn as far as we're aware and they seem to be quite successful at blocking automated checkers and bots. The only sensible way to stop those codes if you really don't want them in your results is to set up a rule to ignore or not check them.

Scrutiny appears to be crawling many more pages than exist / scanning pages without getting closer to completion

There are a few reasons why Scrutiny might be in a loop. The most likely is that there's some kind of session id or tracking id in the querystring, making every url appear unique, even repeated visits to the same page. This is likely with forums / discussion boards. The easy solution to this is to use the 'ignore querystrings' setting.

If you have to allow querystrings because there's a page id in there, then Scrutiny has the option to ignore only the session id (or another single parameter). See the 'Advanced' tab

404s or other errors are reported for links that appear fine in a browser

This can happen with certain servers where both http:// links and https:// links appear on the site. It appears that some servers don't like rapid requests for both http and https urls. Try starting at a https:// url and blacklisting http:// links (make a rule 'do not check urls containing http://') and see whether the https:// links then return the correct code.

It's also worth changing the user-agent string in Preferences, servers can sometimes respond differently to a UA string which isn't a recognised browser, although version 8 automatically does a certain amount of re-trying with alternative settings

Also see the question below about certain social networking sites

A link to [a social networking site ie Youtube, Facebook] is reported as a bad link or an error in Scrutiny, but the link works fine in my browser?

In your browser, log out of the site in question, then visit the link. You'll then be seeing the same page that Scrutiny sees because, by default, it doesn't attempt to authenticate.

If you see a page that says something like 'you need to be logged in to see this content' then this is the answer. It's debatable whether a site should return a 404 if the page is asking you to log in, but that should be taken up with the site in question.

You have several options. You could switch on authentication & cookies in Scrutiny (and log in using the button to the right of those checkboxes). You could set up a rule so that Scrutiny does not check these links, or you could change your profile on the social networking site so that the content is visible to everyone.

If the problem is LinkedIn links giving status 999, then this is a different problem, LinkedIn is detecting the automated requests and is sending the 999 codes in protest. The only way to avoid this (as far as I know) is to seriously throttle Scrutiny (see "pages time out / the web server stops responding" above), but this will seriously slow your scan, and so it may be preferable to set up a rule to ignore the LinkedIn links

Limitations

If your site is a larger site then the memory use and demand on the processor and HD (virtual memory) will increase as the lists of pages crawled and links checked get longer.

Scrutiny has become much more efficient over the last couple of versions, and computer capacities have grown, but if the site is large enough (millions of links) then the app will eventually run out of resources and obviously can't continue.

- Make sure Scrutiny isn't going into a loop or crawling the same page multiple times because of a session id or date in a querystring - you can switch off querystrings in the settings, but make sure that content that you want to crawl isn't controlled by information in the querystring (eg a page id)

- See if you're crawling unnecessary pages, such as a messageboard. To a crawling tool, a well-used messageboard can look like tens of thousands of unique pages and it will try to list and check all of those pages. Again, you can exclude these pages by blacklisting part of the url or querystring or ignoring querystrings.

- You can crawl the site in parts. You can do this by scanning by subdomain, scanning by directory or using blacklisting or whitelisting.

Tips:

If you start within a subdomain (eg engineering.mysite.com ) the scan will be limited to that subdomain if the setting 'consider subdomains of the root domain internal' is switched off

If you start within a 'directory' (eg mysite.com/engineering ) the scan will be limited to that directory

if you create a whitelist rule which says 'only follow links containing /manual/ the scan will be limited to urls which contain that fragment.

I use Google advertising on my pages and don't want hits on these ads from my IP address

The Google Adsense code on your page is just a snippet of javascript and doesn't contain the adverts or the links. When a browser loads the page, it runs the javascript and the ads are then pulled in. Scrutiny doesn't run javascript by default (double-check that the Render page (run javascript) option is turned off) so it won't see any ads or find the links within them.

A link that's shown as "www.mysite.com/../page.html" is reported as an error but when I click it in the browser it works perfectly well

Sometimes a link is written in the html as '../mypage.html'. The ../ means that the page is to be found in the directory above, which is fine as long as the link is deep in the site. If it appears on a top-level page in that form, then it's technically incorrect because no-one should have access to the directory above your domain. Browsers tend to tolerate this and assume the link is supposed to point to the root of your site. By default, Scrutiny does not make this assumption and reports the error. Since v6.8.1 there is a preference to "tolerate ../ that travels above the domain" (General tab)

A link that uses non-ascii or unicode characters is reported as an error but when I click it in the browser it works perfectly well

Scrutiny now handles non-ascii characters in urls.

Internationalized Domain Names (IDN) are now supported in Scrutiny, it uses the standard method of punycode encoding / decoding in order to handle this. Note that it's possible to make an IDN using 'lookalike' characters (homograph attack / script spoofing). Browsers have different ways to defend / protect against this and this may account for a difference between using a link in a browser and Scrutiny's result.

Note that 'unicode normalisation' is a system of replacing or considering equivalent certain similar characters with their more common equivalents. By default this option is switched on in Scrutiny (Preferences > Links > Advanced). A link that behaves differently in a browser and in Scrutiny (especially if it begins to work with the normalisation switched off in Scrutiny) may be a sign that there's something suspicious about your link url.

What do the red and orange colours mean in the list?

To check a link, Scrutiny sends a request and receives a status code back from your server (200, 404, whatever).

The 'status' column tells you the code. 200 codes means that the link is good, 300 means there's something that you may need to know about (usually a redirection) but the link still works, 400 codes mean that the link is bad and the page can't be accessed and 500 codes mean some kind of error with the server. So the higher the number, the more concern about the error. Scrutiny colours these (by default) white, orange and red.

If you don't consider a redirection a problem, then you can switch the orange colour off in Preferences (Links tab). You can also choose different colours or even turn off this colouring completely in Preferences (General tab)

(There's a full list of all the possible status codes here: http://en.wikipedia.org/wiki/List_of_HTTP_status_codes) but Scrutiny does helpfully give you a description of the status as well as the code number.

a 200 is shown for a link where the server doesn't exist

Your provider may be recognising the fact and inserting a page of their own (possibly with a search box and some ads which benefit them financially) and returning a 200 code. They call this a helpful service, but it's unhelpful to us when we're trying to find bad links.

You may be able to ask your service provider to turn this behaviour off (either via a page on their website or by contacting them). Failing that you can use the 'soft 404' feature to raise a problem for such urls. There is a longer explanation of this problem and solution here.

It crashes

This is rare, as far as we're aware, and when it does happen we really would like to know. Please use this form to send some details to help us.

The details within the crash report may be helpful, please send that if possible. Even more important than the report itself is exactly what we need to do to experience the same problem.

Disc space is eaten while Scrutiny runs

This should only happen for very large sites, and since version 6, Integrity and Scrutiny will be much less resource-hungry. Here are some measures to make Scrutiny more efficient.

Go to the settings for your site, Options tab, there are four checkboxes which are labeled 'these options may have a serious impact on resources' - uncheck them if you can, particularly grammar checking and keyword analysis.

Make sure that the javascript option is switched off. This should only be used in very rare circumstances where page content including links are generated by javascript. It's in your site's settings on the Advanced tab ('Render page (run javascript)')

Also uncheck Settings > Options > Archive pages while crawling and Preferences > SEO > Count occurrences of keywords in content. If either of these boxes are checked, Scrutiny necessarily caches the page content. Depending on the size and number of your pages this can mean a significant amount of space. Unless you save the archive after the scan, this cache will be deleted when you quit or failing that, when you start the next scan.

How do I crawl my Wix site

Wix's reliance on javascript / AJAX / Flash makes it really hard for web crawlers (and anyone not using a regular up-to-date browser with js enabled). It's not recommended as a way to make an accessible and search-engine optimised website. If you do need to scan a Wix site, Scrutiny should now detect a Wix site and take the necessary measures to be able to crawl it properly.